Every product team is shipping AI features in 2026. Most of them are shipping the same one: a chat sidebar in the bottom-right with a sparkle icon. It's a workable default. It's also a confession that the team didn't know what else to do.

We've designed AI surfaces for six client products in the last year — three SaaS, two regulated, one consumer. What follows is the pattern language we keep returning to: how prompts should feel, how previews work, and how trust is built (and lost) in fractions of a second.

1. Prompts: stop showing a blank box

The blank chat box is the AI equivalent of a blank Word document. Both have the same problem: you've made the user do all the work.

The patterns that consistently outperformed a blank box in usability tests:

- Inline suggestions, contextual to the screen. Not "what can I help with?" but "Summarise this document. Translate this section. Find similar projects." — the three things 80% of users on this screen actually want.

- Slash commands. Power users want speed. /summarise, /translate, and /find are faster to type than a sentence and easier to remember.

- Recent prompts. Surface the user's last 3–5 prompts inline. Repeat usage is enormous in real telemetry.

- Templated prompts with editable slots. "Generate a [status report] for [project] covering [period]." Click each slot, adjust, run.
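As a rough illustration of the templated-prompt pattern, here is a minimal Python sketch. It assumes slots are written in square brackets, as in the example above, and that each slot's default text doubles as its name. `PromptTemplate`, `set_slot`, and `render` are hypothetical names chosen for this sketch, not part of any library or of our production code.

```python
import re
from dataclasses import dataclass, field

# Assumption: a slot is any run of text wrapped in square brackets.
SLOT_PATTERN = re.compile(r"\[([^\[\]]+)\]")


@dataclass
class PromptTemplate:
    text: str
    # Values the user has typed into slots, keyed by slot name.
    values: dict = field(default_factory=dict)

    def slots(self) -> list:
        """Return slot names in order of appearance."""
        return SLOT_PATTERN.findall(self.text)

    def set_slot(self, slot: str, value: str) -> None:
        """Record the user's edit for one slot."""
        if slot not in self.slots():
            raise KeyError(f"unknown slot: {slot}")
        self.values[slot] = value

    def render(self) -> str:
        """Fill edited slots; untouched slots fall back to their default text."""
        return SLOT_PATTERN.sub(
            lambda m: self.values.get(m.group(1), m.group(1)), self.text
        )


template = PromptTemplate(
    "Generate a [status report] for [project] covering [period]."
)
template.set_slot("project", "Apollo")
template.set_slot("period", "Q3")
prompt = template.render()
```

Because untouched slots render as their default text, the prompt stays runnable at every step, so the user never faces an empty field.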

The blank box is the worst-performing pattern by every metric we've measured. It also happens to be the easiest to ship. That's not a coincidence.

2. Previews: show the work, don't make them wait for it

When the model is generating, the user is staring at the screen. The temptation is to fill that gap with a spinner, or worse, a fake "thinking…" animation that lies about what's happening. Both are worse than the alternative.

What works:

- Stream the output. Even if the streamed text is provisional, users prefer it to a black box. The empty-screen-to-output gap should never be more than 400ms.

- Show a structured skeleton first. If you know the output is going to be a 5-section document, show the headings as soon as you have them and stream content under each. The perceived latency drops by half.

- Show what's being retrieved. "Searching across [project A, project B, project C]" with a live ticker. Users trust visible work; they distrust silence.

- Let them stop and steer. A "stop" button isn't optional. A "regenerate this section" button changes the product.
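The four points above can be sketched as a single event stream that the UI renders as it arrives. This is an illustrative Python sketch under simplifying assumptions: the section headings are known up front, the content chunks are supplied as plain iterables rather than coming from a real model, and the `Event` type, its `kind` values, and `stream_preview` are all hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, Iterator, List


@dataclass(frozen=True)
class Event:
    kind: str     # "retrieving", "skeleton", "delta", or "stopped"
    section: str  # heading the event belongs to ("" for whole-response events)
    text: str


def stream_preview(
    sources: List[str],
    sections: Dict[str, Iterable[str]],
    should_stop: Callable[[], bool] = lambda: False,
) -> Iterator[Event]:
    """Yield UI events: retrieval first, then the skeleton, then content."""
    # 1. Show what is being retrieved before any text exists.
    yield Event("retrieving", "", ", ".join(sources))
    # 2. Emit every heading up front so the layout appears immediately.
    for heading in sections:
        yield Event("skeleton", heading, heading)
    # 3. Stream content under each heading, checking the stop button
    #    before every chunk so the user can cut generation short.
    for heading, chunks in sections.items():
        for chunk in chunks:
            if should_stop():
                yield Event("stopped", heading, "")
                return
            yield Event("delta", heading, chunk)


events = list(
    stream_preview(
        ["project A", "project B"],
        {"Summary": ["All ", "good."], "Risks": ["None."]},
    )
)
```

Tagging each delta with its section is also what makes a "regenerate this section" button cheap to build: the client can discard and re-request one heading's content without touching the rest.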

The single biggest UX upgrade we shipped on a recent build was switching from a 6-second silent wait to a 6-second streaming preview with the retrieved sources visible. Same total latency. ~40% lift in user-rated quality. The output literally didn't change.

3. Trust: earned by the third interaction

In our usability sessions, the moment the user decides whether the AI is "good" happens around interaction three, not interaction one. Interactions one and two are exploratory; interaction three is the test. If the product fails that test, they don't come back.

What earns trust by interaction three:

- Citations on every claim. Not at the bottom, not in a tooltip — inline, clickable, on the actual sentence. The user should be able to verify any non-trivial claim in one click.

- Confidence calibration. When the model is unsure, say so. "I'm confident about A and B, less sure about C." Users find this dramatically more trustworthy than uniform confidence on everything.

- Showing what was excluded. "I looked at 14 documents but didn't include 9 because they were outdated." This is rare in production but it's the single best trust signal we've seen.

- Failure modes that admit failure. When the model can't help, "I don't know" beats "Here's a plausible-sounding wrong answer" by a mile. Yet many products still ship the latter.
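One way to make these signals hard to forget is to carry them in the answer's data model instead of leaving them to the prompt. The Python sketch below is an assumption-laden illustration: `Claim`, `Answer`, `render_answer`, the bracketed source markers, and the 0.6 confidence threshold are all hypothetical choices, and it presumes the model or pipeline can supply a per-claim confidence score.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Claim:
    text: str
    source_id: str     # document the claim can be verified against
    confidence: float  # 0.0 to 1.0, assumed to come from the pipeline


@dataclass
class Answer:
    claims: List[Claim]
    considered: int  # how many documents were looked at
    # Excluded document id -> reason it was left out.
    excluded: Dict[str, str] = field(default_factory=dict)


def render_answer(answer: Answer, low_confidence: float = 0.6) -> str:
    """Render claims with inline citations, caveats, and exclusions."""
    # Failure mode that admits failure: no claims means no answer.
    if not answer.claims:
        return "I don't know."
    lines = []
    for claim in answer.claims:
        # The citation sits on the sentence itself, not in a footer.
        caveat = " (less sure)" if claim.confidence < low_confidence else ""
        lines.append(f"{claim.text} [{claim.source_id}]{caveat}")
    if answer.excluded:
        reasons = ", ".join(sorted(set(answer.excluded.values())))
        lines.append(
            f"Looked at {answer.considered} documents; "
            f"left out {len(answer.excluded)} ({reasons})."
        )
    return "\n".join(lines)


answer = Answer(
    claims=[
        Claim("Budget is on track.", "doc-3", 0.9),
        Claim("Launch may slip.", "doc-7", 0.4),
    ],
    considered=3,
    excluded={"doc-1": "outdated"},
)
rendered = render_answer(answer)
```

In a real interface the bracketed ids would be clickable links, but the structure is the point: a claim cannot be constructed without a source and a confidence value.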

What loses trust by interaction three:

- A confidently wrong answer with no caveat.

- A formatting glitch in the output.

- A 12-second wait with no streaming.

- An action taken on the user's data without a confirmation step.
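The last item is the easiest to guard against in code. A minimal Python sketch, assuming every AI-initiated change is wrapped in a proposal object that refuses to run until the user confirms it; `ProposedAction` and its methods are hypothetical names for this illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ProposedAction:
    description: str         # shown to the user before anything happens
    run: Callable[[], str]   # the actual change, deferred until confirmed
    confirmed: bool = False

    def confirm(self) -> None:
        """Called only from an explicit user gesture, such as a button click."""
        self.confirmed = True

    def execute(self) -> str:
        """Run the change, refusing if the user has not confirmed it."""
        if not self.confirmed:
            raise PermissionError(
                f"'{self.description}' needs user confirmation first"
            )
        return self.run()


audit_log = []
action = ProposedAction(
    "Archive 9 outdated documents",
    lambda: audit_log.append("archived") or "done",
)

try:
    action.execute()  # the model tries to act on its own
    blocked = False
except PermissionError:
    blocked = True    # nothing has touched the user's data

action.confirm()      # the user clicks "Archive"
result = action.execute()
```

The model can still suggest as many actions as it likes. What it cannot do is skip the step where the user sees the description and agrees to it.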

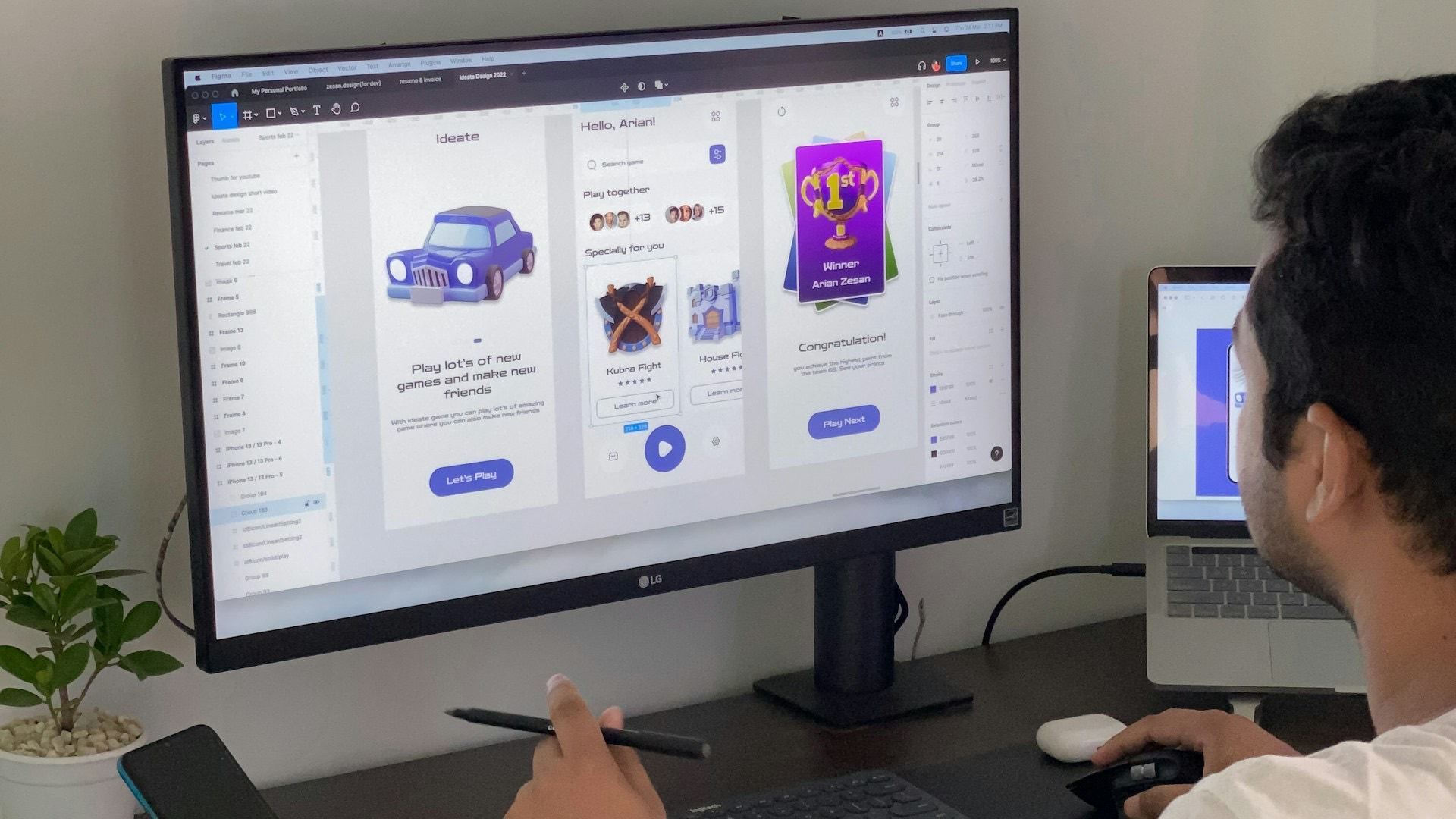

4. The "AI-native" shift: AI in the surface, not next to it

The chat-sidebar pattern peaked in 2024. Products that win in 2026 put AI inside the surface the user is already on:

- A document editor where the AI lives in the gutter, not in a separate panel.

- A spreadsheet where formulas can be expressed in plain language and inline-replaced.

- A risk register where the AI flags the row in red, with a one-click "explain" — not a separate dashboard.

- A search box that returns answers and sources, in the same scroll.

In Vero, the AI is everywhere (risk surfacing, traceability links, automatic document generation, semantic search), but there's no chat sidebar at all. Users don't talk to the AI; they use AI-augmented features. (More on the architecture behind this in "Why we built an AI-native PMO".)

5. The patterns to retire

For completeness, the patterns we'd argue against shipping in 2026:

- The sparkle icon as primary navigation. Tells the user there's "AI somewhere." Tells them nothing useful.

- The full-screen modal chat. Forces the user out of their work. Almost always the wrong choice.

- The "magic wand" with no preview. Click → wait → result. No explanation, no edit. Users distrust it within two uses.

- The thumbs-up / thumbs-down with no follow-up. Useless feedback signal. If you ask, ask why.

The summary, on one line

Show suggestions instead of a blank box. Stream previews instead of spinners. Cite sources instead of asserting. Suggest actions instead of executing them. Earn trust by the third interaction, or lose the user.

That's most of what we know.